Q-Diffusion: Quantizing Diffusion Models

ICCV 2023

Abstract

Diffusion models have achieved great success in image synthesis through iterative noise estimation using deep neural networks. However, the slow inference, high memory consumption, and computation intensity of the noise estimation model hinder the efficient adoption of diffusion models. Although post-training quantization (PTQ) is considered a go-to compression method for other tasks, it does not work out-of-the-box on diffusion models. We propose a novel PTQ method specifically tailored towards the unique multi-timestep pipeline and model architecture of diffusion models, which compresses the noise estimation network to accelerate the generation process. We identify the key difficulty of diffusion model quantization as the changing output distributions of noise estimation networks over multiple time steps and the bimodal activation distribution of the shortcut layers within the noise estimation network. We tackle these challenges with time step-aware calibration and shortcut-splitting quantization in this work. Experimental results show that our proposed method is able to quantize full-precision unconditional diffusion models into 4-bit while maintaining comparable performance (small FID change of at most 2.34 compared to >100 for traditional PTQ) in a training-free manner. Our approach can also be applied to text-guided image generation, where we can run Stable Diffusion with 4-bit weights with high generation quality for the first time.

Time Step-aware Calibration Data Sampling
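
The abstract attributes part of the difficulty to output distributions that change across time steps, which suggests drawing calibration data from many time steps rather than a single one. The following is a minimal sketch of that idea, not the authors' implementation: it assumes inputs recorded from the full-precision model are available per time step, and the names `build_calibration_set`, `trajectories`, `interval`, and `per_step` are hypothetical.

```python
import random


def build_calibration_set(trajectories, interval, per_step, seed=0):
    """Pick calibration samples spread evenly over the denoising trajectory.

    trajectories: dict mapping a time step t to a list of inputs x_t that
                  were recorded while running the full-precision model
                  (hypothetical storage format, assumed for this sketch).
    interval:     keep every `interval`-th time step.
    per_step:     number of samples to draw at each kept time step.
    Returns a list of (x_t, t) pairs.
    """
    rng = random.Random(seed)
    kept_steps = sorted(trajectories)[::interval]
    calibration = []
    for t in kept_steps:
        pool = trajectories[t]
        # Draw without replacement; never ask for more than the pool holds.
        picked = rng.sample(pool, min(per_step, len(pool)))
        calibration.extend((x, t) for x in picked)
    return calibration


# Toy usage: 20 time steps, 5 recorded inputs each (strings as stand-ins).
trajectories = {t: [f"x{t}_{i}" for i in range(5)] for t in range(20)}
calibration = build_calibration_set(trajectories, interval=5, per_step=2)
```

Each kept time step contributes the same number of samples, so the calibration set covers early, middle, and late denoising steps instead of a single activation distribution.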

Shortcut-splitting Quantization
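
The abstract notes that shortcut layers show a bimodal activation distribution. A minimal sketch of why splitting can help, under assumptions not stated on this page: the two concatenated halves are modeled as plain Python lists with very different ranges, and each half is quantized with its own round-to-nearest scale instead of one shared scale. The helper names (`quantize`, `joint_quantize`, `split_quantize`, `mse`) and the toy data are hypothetical; the sketch covers activations only.

```python
def quantize(values, bits):
    """Uniform round-to-nearest fake quantization with a single scale."""
    lo, hi = min(values), max(values)
    levels = 2 ** bits - 1
    scale = (hi - lo) / levels or 1.0  # fall back to 1.0 for a constant input
    return [round((v - lo) / scale) * scale + lo for v in values]


def mse(a, b):
    """Mean squared error between two equal-length lists."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def joint_quantize(shortcut, deep, bits):
    # One scale for the whole concatenated tensor.
    return quantize(shortcut + deep, bits)


def split_quantize(shortcut, deep, bits):
    # Separate scale for each half, concatenated afterwards.
    return quantize(shortcut, bits) + quantize(deep, bits)


# Toy bimodal input: a narrow-range half and a wide-range half.
shortcut = [i / 100 for i in range(-50, 51)]  # values in [-0.5, 0.5]
deep = [i / 5 for i in range(-50, 51)]        # values in [-10, 10]
reference = shortcut + deep

joint_error = mse(reference, joint_quantize(shortcut, deep, bits=4))
split_error = mse(reference, split_quantize(shortcut, deep, bits=4))
```

With a shared scale, the wide-range half dictates the step size and the narrow-range half collapses onto one or two quantization levels; with separate scales, each half uses all of its levels.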

Results: Unconditional Generation

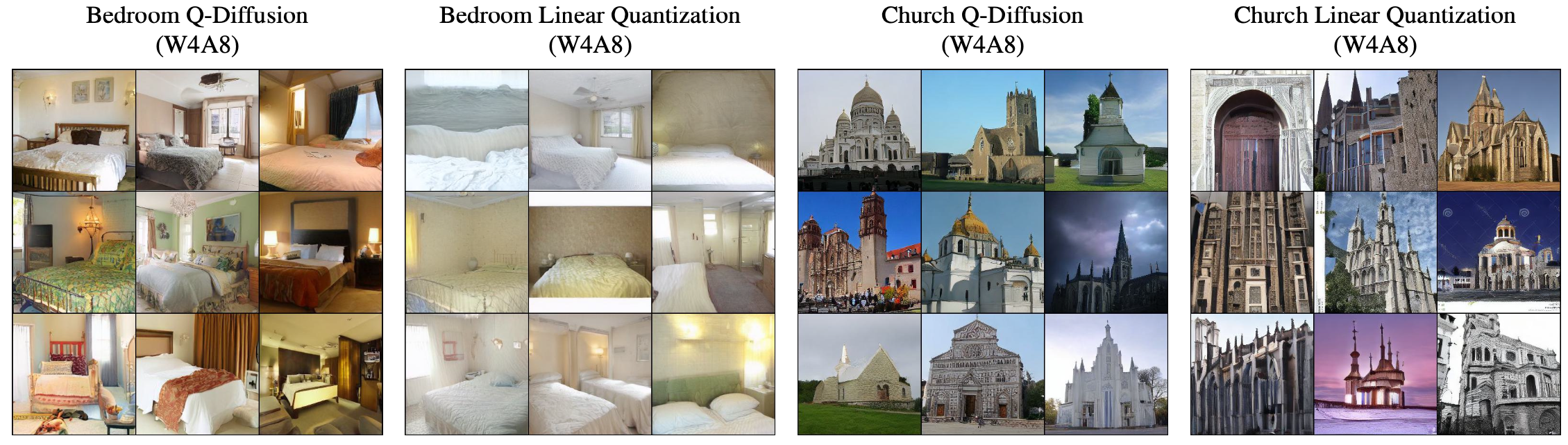

LSUN results using Q-Diffusion and Linear Quantization (round-to-nearest), Latent Diffusion

Results: Text-guided Image Generation

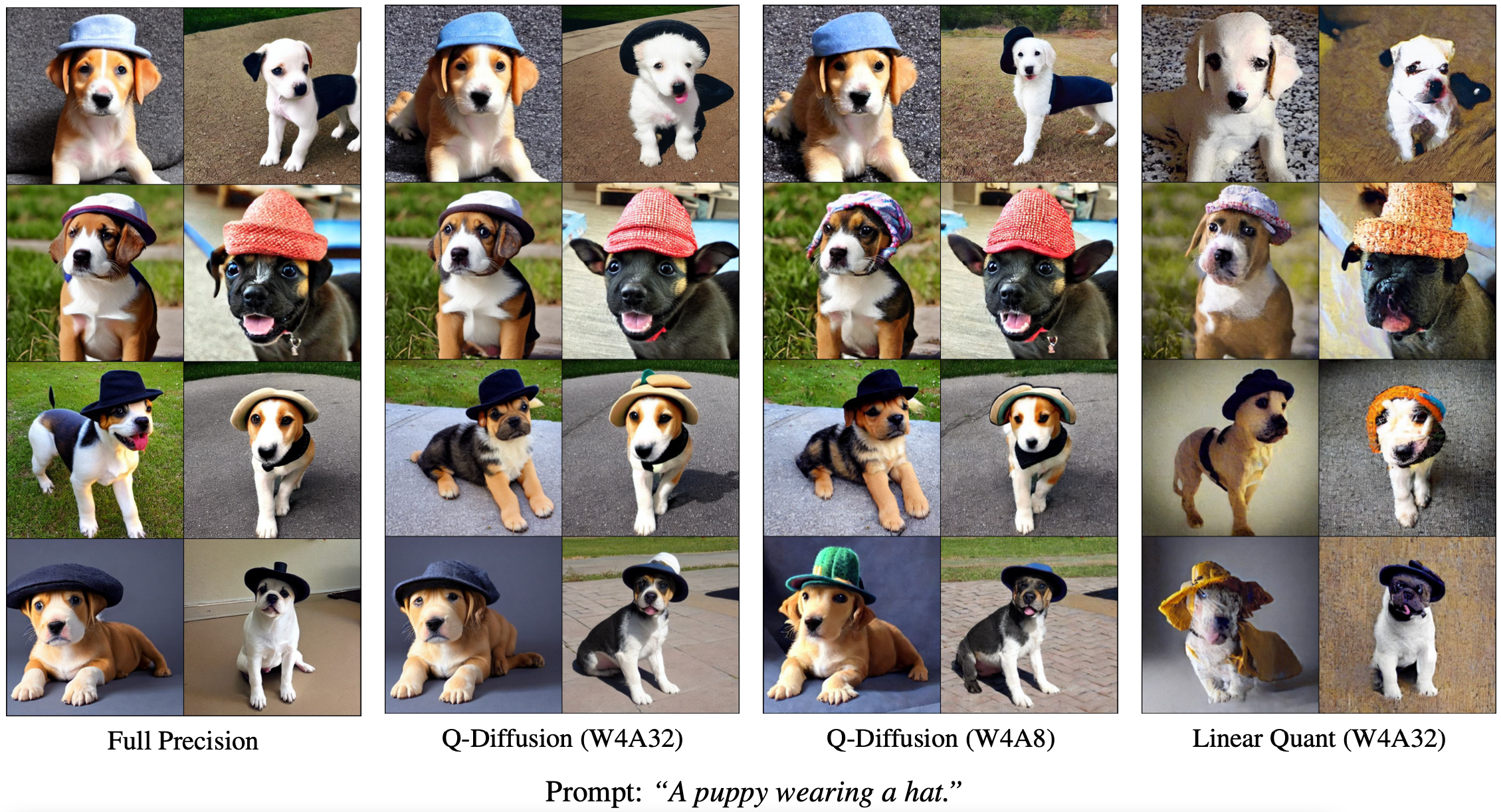

txt2img results using Q-Diffusion and Linear Quantization (round-to-nearest), Stable Diffusion v1.4

Paper

X. Li, Y. Liu, L. Lian, H. Yang, Z. Dong, D. Kang, S. Zhang, and K. Keutzer. Q-Diffusion: Quantizing Diffusion Models. arXiv:2302.04304, 2023.

Citation

@InProceedings{li2023qdiffusion,
    author={Li, Xiuyu and Liu, Yijiang and Lian, Long and Yang, Huanrui and Dong, Zhen and Kang, Daniel and Zhang, Shanghang and Keutzer, Kurt},
    title={Q-Diffusion: Quantizing Diffusion Models},
    booktitle={Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV)},
    month={October},
    year={2023},
    pages={17535-17545}
}

Acknowledgements

We thank Berkeley Deep Drive, Intel Corporation, Panasonic, and NVIDIA for supporting this research. We also would like to thank Sehoon Kim, Muyang Li, and Minkai Xu for their valuable feedback. The design of this project page template references the project pages of Denoised MDPs and a colorful ECCV project.